[Image above] No more smudged screens once you can operate your phone hands-free. Credit: Made by Google, YouTube

When it comes to Marvel technology, hands down my favorite device is the holographic interface Tony Stark uses to research and design all his creations.

With just a swipe of his hand, Stark can make his screens expand, rotate, and scroll, actions that in real life require us to touch a physical screen.

At this point, creating holographic interfaces is still out of reach, so we will have to be content with physical screens. However, if the new sensor Google is developing achieves its goals, touching screens to operate them may no longer be necessary.

Tony Stark is lucky he does not need to touch screens to operate them, considering how often his hands are covered in food residue. Credit: RED Lion Movie Shorts, YouTube

The Soli sensor is a radar sensor, meaning it uses electromagnetic waves to gather information. Radar sensors are not new (when was the last time you got caught speeding?). Soli differs from other radar sensors, though, in a few ways that Google explains on the project webpage.

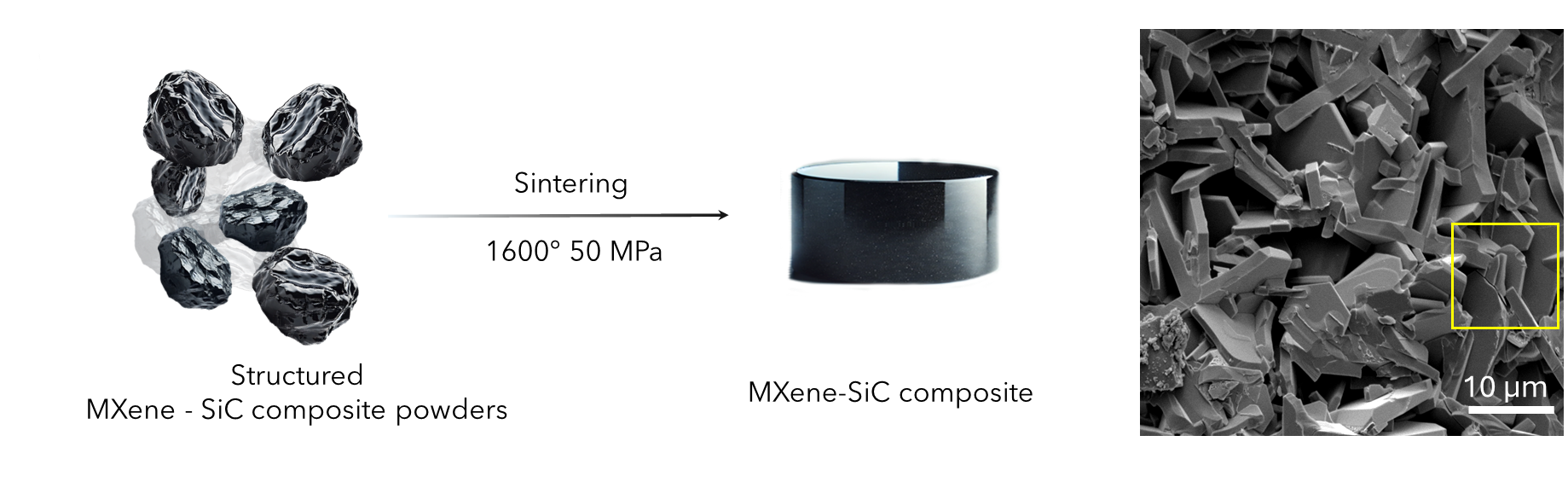

The Soli chip reduces radar system design complexity and power consumption, and Google has built it in two modulation architectures: a frequency-modulated continuous wave (FMCW) radar and a direct-sequence spread spectrum (DSSS) radar. Both chips integrate the entire radar system into a single, ultra-compact 8 mm × 10 mm package, which also contains multiple beamforming antennas that enable 3D tracking and imaging.
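For a rough sense of scale, the short Python sketch below works out two textbook radar quantities: the wavelength at a given frequency and the theoretical range resolution of an FMCW radar, c / (2B), where B is the swept bandwidth. The article does not state the bandwidth Soli actually sweeps, so the 7 GHz figure (the full width of the 57–64 GHz band) is purely an assumption for illustration, and the function names are hypothetical.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength for a given carrier frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return SPEED_OF_LIGHT / frequency_hz


def fmcw_range_resolution_m(bandwidth_hz: float) -> float:
    """Textbook FMCW range resolution: c / (2 * swept bandwidth)."""
    if bandwidth_hz <= 0:
        raise ValueError("bandwidth must be positive")
    return SPEED_OF_LIGHT / (2.0 * bandwidth_hz)


# Assumption: the chirp sweeps the whole 57-64 GHz band (7 GHz).
assumed_bandwidth_hz = 64e9 - 57e9

print(f"wavelength at 60 GHz: {wavelength_m(60e9) * 1000:.2f} mm")  # 5.00 mm
print(f"range resolution:     {fmcw_range_resolution_m(assumed_bandwidth_hz) * 100:.2f} cm")  # 2.14 cm
```

A millimeter-scale wavelength is one reason antennas for this band can fit inside a package only a few millimeters across.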

The Soli software development kit (SDK) enables developers to easily access and build upon Google’s generalized gesture recognition pipeline. The pipeline works by implementing several stages of signal abstraction, including:

- raw radar data to signal transformations,

- core and abstract machine learning features,

- detection and tracking,

- gesture probabilities, and

- UI tools to interpret gesture controls.
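To make the stages above more concrete, here is a toy Python sketch of a five-stage pipeline that mirrors the list. It is not the Soli SDK and does not use any Google API: every function name, threshold, gesture label, and the hand-written scoring rule are hypothetical stand-ins, and the "radar frames" are plain lists of numbers.

```python
import math
from typing import Dict, List, Optional

Frame = List[float]  # toy stand-in for one frame of raw radar samples


def transform(frames: List[Frame]) -> List[float]:
    """Stage 1: raw data -> signal transformation (mean energy per frame)."""
    return [sum(s * s for s in frame) / len(frame) for frame in frames]


def extract_features(energy: List[float]) -> Dict[str, float]:
    """Stage 2: features (average energy and its change from first to last frame)."""
    return {
        "mean_energy": sum(energy) / len(energy),
        "trend": energy[-1] - energy[0],
    }


def detect(features: Dict[str, float], threshold: float = 0.1) -> bool:
    """Stage 3: detection (is there enough reflected energy to bother classifying?)."""
    return features["mean_energy"] > threshold


def gesture_probabilities(features: Dict[str, float]) -> Dict[str, float]:
    """Stage 4: gesture probabilities (softmax over hand-written scores)."""
    scores = {
        "approach": features["trend"],
        "retreat": -features["trend"],
        "hover": 0.0,
    }
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {name: math.exp(score - peak) for name, score in scores.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}


def interpret(probs: Dict[str, float], min_confidence: float = 0.5) -> Optional[str]:
    """Stage 5: UI layer (map the most likely gesture to a hypothetical action)."""
    actions = {"approach": "wake_screen", "retreat": "dim_screen", "hover": "no_op"}
    gesture = max(probs, key=probs.get)
    return actions[gesture] if probs[gesture] >= min_confidence else None


def run_pipeline(frames: List[Frame]) -> Optional[str]:
    """Chain the five stages; return an action name or None."""
    features = extract_features(transform(frames))
    if not detect(features):
        return None
    return interpret(gesture_probabilities(features))


# Reflected energy grows frame by frame, as if a hand were approaching.
print(run_pipeline([[0.1, 0.1], [1.0, 1.0], [2.0, 2.0]]))  # wake_screen
```

In a real system the hand-written scores in stage 4 would be replaced by a trained machine learning model; the point here is only the layering, where each stage consumes the previous stage's output.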

Google publicly launched Project Soli in 2015 with a 4-minute video (shown below) posted to their Advanced Technology and Projects (ATAP) group YouTube page.

Credit: Google ATAP, YouTube

On January 1 this year, Reuters reported that the Federal Communications Commission (FCC) granted Google a waiver to operate Soli sensors in the 57–64 GHz frequency band at higher power levels than the FCC currently permits. Google requested the higher power levels last March because it claims Soli is not as accurate at lower power. The higher power levels are still in line with limits set by the European Telecommunications Standards Institute.

When the FCC waiver news first broke, many believed it would take quite some time before Soli was installed on Pixels and Chromebooks. However, the recent announcement by Google that Soli will be installed on the Pixel 4 releasing this fall means we may see the technology in just a few short months!

In a promo video for the Pixel 4, Google shows the phone unlocking when it senses an approaching face and skipping through songs at the wave of a hand.

Many comments below the promo video compare the Soli technology to the “Air Gesture” feature on the Samsung Galaxy S4. However, Air Gesture uses infrared rather than radar sensors to detect motion, a difference that could make Soli technology more accurate.

Update 09/16/2020 – Google has removed the original video from their YouTube channel. View a copy of the original video here.

Credit: Made by Google, YouTube

It is highly unusual for Google to reveal so much about the Pixel 4 before its official release. The Verge executive editor Dieter Bohn explains in the video below what Google may hope to gain from publicly releasing this information so soon.

Credit: The Verge, YouTube

Author

Lisa McDonald
