[Image above] NIST released its report from May’s MGI workshop, “Building the Materials Innovation Infrastructure: Data and Standards.” Credit: OSTP.

The stated goal of the Materials Genome Initiative is “to double the speed at which we discover, develop and manufacture new materials.” The goals are clear, but how to achieve them is challenging. MGI will draw on the concerted efforts of academia, manufacturers, federal funding agencies and national labs. Meanwhile, each of those constituencies must remain true to its own mission, and there are often dependencies between them, for example, between academia and federal funding agencies.

Also, as Cyrus Wadia, MGI’s White House point man, explained in an interview with us last year, the idea is for MGI to evolve in a grassroots manner rather than bureaucratically from the top down. Since it was announced in June 2011, the MGI has grown from a twinkle in the eye of the White House OSTP into a multi-agency initiative taking its first toddling steps. And, indeed, with the first drops from the funding tap now flowing from diverse funding agencies, it looks like the concept is working.

Even so, getting the materials science community’s collective arms around MGI is not so easy. NIST, as the nation’s data and standards expert, is naturally positioned to take a leadership role in defining the issues and guiding the development of a “materials innovation infrastructure.”

NIST embraced that challenge and opportunity, and last May it convened a workshop, “Building the Materials Innovation Infrastructure: Data and Standards,” to help define the “cross-cutting and domain-specific data challenges” that need to be overcome.

The workshop brought together 125 stakeholders from academia, federal agencies, national labs, industry and professional societies. Most, although not all, participants came from United States organizations. In November, the agency released a report summarizing the workshop’s evaluation of the status of the Materials Innovation Infrastructure (MII) and the gaps and opportunities it identified. The report also outlines the process the group used to attack the issue.

Jim Warren, a NIST scientist and the report’s lead author, explained in a phone interview that the MII is “about lowering the barrier to entry for manufacturers” to accelerate materials innovation. He says some disciplines are well ahead in developing a data infrastructure, for example with regard to data sharing and quality, computer codes and metrics. “We want to harvest the best practices and use them where it makes sense for materials science,” he says.

Warren likens the coalescence of the MII to the birth and growth of the Internet “superhighway.” He says, “We are all willing to pay a small cost for access to the Internet, which makes our life better. The MII imagines something similar for materials data.”

The MII can also be compared to another established infrastructure model: the US system of roads and highways. Some roads are owned at the federal level, some at the state or local level, and others are private. Envision the emerging MII as having a similar mix of owners, access points, etc.

At the workshop, the participants were charged with assessing data infrastructure in four areas: data representation and interoperability, data management, data quality and data usability. To provide a framework for the discussion, participants considered the four areas in the context of two broad topics: length scale challenges and technical applications.

Length scale challenges fall into two categories: challenges relating to the mathematics of the scale and crossing regimes, and challenges relating to the computing power needed to perform the calculations.

For example, phase field methods are used to model microstructure development in the nanometer to micron range. However, microstructure development arguably can also be modeled at the crystal lattice scale with approaches such as density functional theory. Finding the mathematics that bridges the two is something like finding a clutch that can shift directly between first gear and fourth gear.

Also, according to Warren, it does not take long to max out the available computing power. Say, for example, you want to model one millimeter of a solidification interface. Modeling conditions every 10 angstroms, both normal to and along the interface, very quickly generates terabytes of data.
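The scale of Warren’s example can be checked with a back-of-envelope estimate. The sketch below is a hypothetical illustration, not a calculation from the report: it assumes a square two-dimensional domain 1 mm on a side (one axis normal to the interface, one along it), a uniform grid spacing of 10 angstroms (1 nm), and one double-precision (8-byte) value stored per grid point for a single time step. The names `grid_points_per_axis` and `snapshot_bytes` are invented for this example.

```python
"""Back-of-envelope storage estimate for a uniform simulation grid.

Hypothetical sketch: domain size, grid spacing, dimensionality and bytes per
value are illustrative assumptions, not figures from the NIST report.
"""

DOMAIN_M = 1e-3        # assumed domain edge length: 1 mm, in meters
SPACING_M = 1e-9       # assumed grid spacing: 10 angstroms = 1 nm, in meters
BYTES_PER_VALUE = 8    # assumed: one double-precision value per grid point


def grid_points_per_axis(domain_m: float, spacing_m: float) -> int:
    """Number of grid points along one axis (rounded to absorb float error)."""
    return round(domain_m / spacing_m)


def snapshot_bytes(domain_m: float, spacing_m: float, dims: int,
                   bytes_per_value: int = BYTES_PER_VALUE) -> int:
    """Bytes needed to store one field on the grid at a single time step."""
    n = grid_points_per_axis(domain_m, spacing_m)
    return n ** dims * bytes_per_value


if __name__ == "__main__":
    size_2d = snapshot_bytes(DOMAIN_M, SPACING_M, dims=2)
    print(f"points per axis: {grid_points_per_axis(DOMAIN_M, SPACING_M):,}")
    print(f"2D snapshot: {size_2d / 1e12:.0f} TB")
```

Under these assumptions each axis holds 1,000,000 points, so the 2D grid holds 10^12 points and a single field at a single time step already occupies about 8 TB. Storing many time steps or several fields multiplies that figure, and adding a third spatial dimension would raise it by another factor of a million.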

The workshop participants divided length scales into regimes: macro, micro, nano and molecular, and atomic. Within each regime, challenges were prioritized and categorized as short-term or long-term, depending on whether addressing them could make a difference in less than five years or would take more than five years. For example, in the area of data representation and interoperability, participants identified the “definition of data or metadata for particular applications with standards for software to facilitate linkage” as both a high priority and something achievable in the short term.

The organizers of the workshop also recognized that new materials developed under the MGI framework will be developed with specific applications in mind. Thus, they considered four “technical application areas” (TAAs): electrochemical storage, high-temperature alloys, catalysis and lightweight structural materials. They selected these TAAs because, broadly speaking, they represent areas that are well positioned to adopt an MGI approach. Warren notes, however, that the four TAAs are only representative examples, and workshop organizers and participants acknowledge that many other applications could have been considered instead.

In both contexts, the key metric considered was the amount of time that could be saved if the data challenges were eliminated; cost issues were not addressed directly.

Finally, the report calls out cross-cutting challenges that impact anybody involved in materials design, such as community leadership, data sharing, computational validation, etc.

According to Warren, MGI leaders are optimistic that the initiative will begin to yield benefits as early as 2013, with the rollout of prototype solutions and virtual communities. Contact Warren via email to learn more about MGI and related activities.

Author

Eileen De Guire

CTT Categories

- Basic Science

- Manufacturing

- Modeling & Simulation

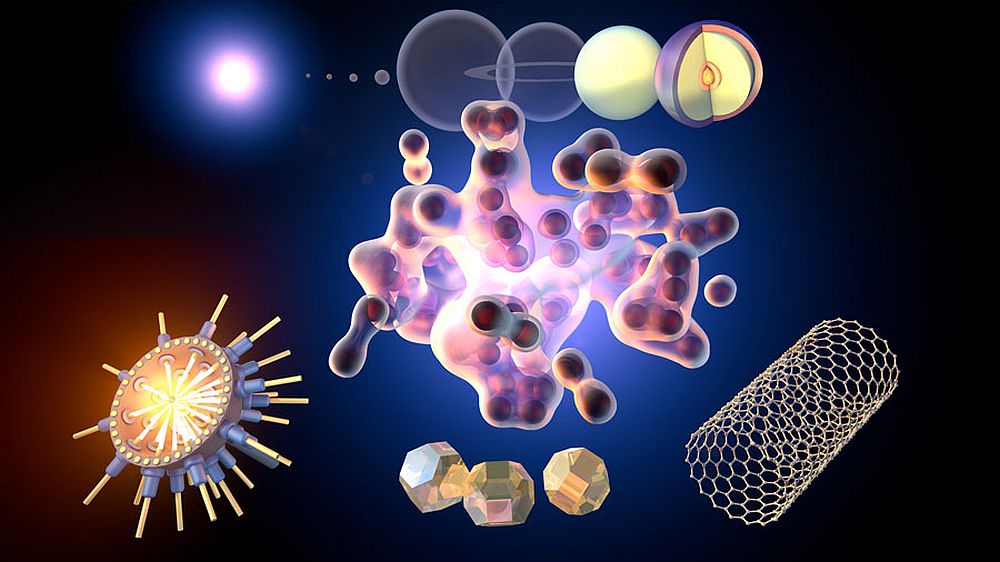

- Nanomaterials