[Image above] A new research initiative at Lehigh University is taking the human element into account in its quest to evolve how we analyze data. Credit: Lehigh University; YouTube

Scientifically speaking, we’re living in some pretty sweet times.

There are a whole host of high-tech analytical techniques available today to explore nanomaterials, often deciphering structure and composition atom by atom.

While these analysis techniques offer incredible power, they also tend to generate incredible amounts of data—terabytes of data per experiment.

One challenge of all this data is simply storage, which may eventually be met by interesting innovations such as nanostructured glass disks or holographic nanoparticle thin films.

But in addition to the simple challenges of data storage, another persistent and increasing challenge remains—how do we analyze and interpret such vast quantities of data in a way that yields meaningful information?

Because even if we generate piles and piles of data, those piles are as useless as piles of garbage (actually, more useless than piles of garbage) if we can’t make sense of the data.

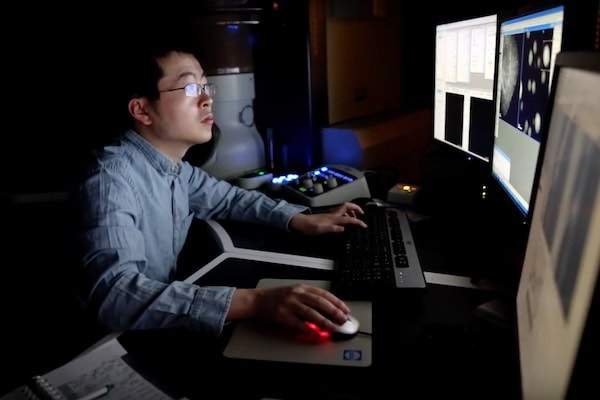

The techniques and capabilities of our experiments and equipment have come so far that the scales have tipped against us humans: our brains are now often the limiting factor in analyzing and interpreting our experiments.

So in an effort to develop more intelligent data analysis to drive informed nanomaterials selection, a unique research initiative at Lehigh University (Pa.) is taking the human element into account in its quest to evolve how we analyze data.

Led by ACerS Distinguished Lifetime Member Martin Harmer, the Nano/Human Interface Presidential Engineering Research Initiative stands out for its emphasis on the human element of data.

“The Nano/Human Interface initiative emphasizes the human because the successful development of new tools for data visualization and manipulation must necessarily include a consideration of the cognitive strengths and limitations of the scientist,” according to a Lehigh press release.

At its core, the initiative is looking to completely alter how we interact with and experience data—in a way that allows us to make more informed sense of nanomaterials data.

Learn more in the short video below from Lehigh University.

Credit: Lehigh University, YouTube

And now, as proof of its potential, the relatively recent research initiative has its first published paper. That paper, published in npj Computational Materials, demonstrates a technique to map multidimensional material property relationships using data analytic methods and a visualization strategy called parallel coordinates.

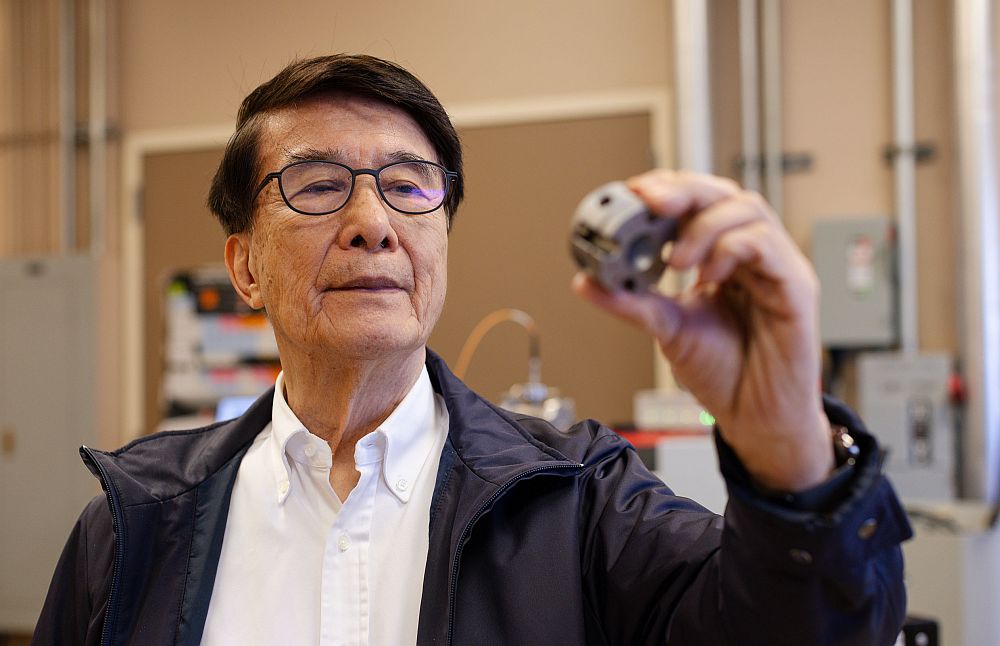

According to the paper’s author, Jeffrey Rickman—who is a professor of materials science and engineering, in addition to physics, at Lehigh—“In the paper, we illustrate the utility of this approach by providing a quantitative way to compare metallic and ceramic properties—though the approach could be applied to any materials you want to compare.”

In the paper, Rickman shows how parallel coordinates can help researchers analyze many nanomaterials properties at once, a complexity that otherwise makes it hard for scientists to identify patterns when visualizing data.

“If plotting points in two dimensions using X and Y axes, you might see clusters of points and that would tell you something or provide a clue that the materials might share some attributes,” he explains. “But what if the clusters are in 100 dimensions?”
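For readers who have not met parallel coordinates before, the idea can be sketched in a few lines of Python. Each property gets its own vertical axis, placed side by side, and each material becomes a polyline that crosses every axis at its (rescaled) value, so any number of dimensions fits on one flat chart. This is only an illustrative sketch, not the code from the paper: the function name `parallel_coordinates`, the sample names, and the numbers are all made up for this example.

```python
from typing import Dict, List, Tuple


def parallel_coordinates(
    rows: Dict[str, List[float]]
) -> Dict[str, List[Tuple[int, float]]]:
    """Turn each sample into a polyline with one vertex per property axis.

    Every property is min-max scaled to [0, 1] so that axes with
    different units can share a single vertical scale.
    """
    n_axes = len(next(iter(rows.values())))
    lows = [min(values[i] for values in rows.values()) for i in range(n_axes)]
    highs = [max(values[i] for values in rows.values()) for i in range(n_axes)]

    lines: Dict[str, List[Tuple[int, float]]] = {}
    for name, values in rows.items():
        line = []
        for i, x in enumerate(values):
            span = highs[i] - lows[i]
            # A constant property carries no contrast; park it mid-axis.
            y = 0.5 if span == 0 else (x - lows[i]) / span
            line.append((i, y))  # (axis index, height on that axis)
        lines[name] = line
    return lines


# Hypothetical samples with three made-up properties each (not measured data).
samples = {
    "sample_a": [1.0, 200.0, 0.25],
    "sample_b": [3.0, 100.0, 0.75],
    "sample_c": [2.0, 150.0, 0.50],
}
lines = parallel_coordinates(samples)
```

Drawing each entry of `lines` as a connected line across the axes gives the chart. Samples whose polylines run close together share similar properties, and lines that cross between two neighboring axes hint that those two properties trade off against each other.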

The power of Rickman’s technique is that parallel coordinates can help eliminate irrelevant dimensions, reducing “noise” in nanomaterials data and, in turn, illuminating significant and unique property correlations within the data.
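One simple way to picture "eliminating dimensions" is to keep only the property axes that track a property of interest. The sketch below does this with a plain Pearson correlation cutoff. That filter is a stand-in chosen for illustration and should not be read as the analysis used in the paper; the names `pearson` and `relevant_axes`, the 0.8 threshold, and the data are all assumptions.

```python
import math
from typing import Dict, List


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 for a constant series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if norm_x == 0 or norm_y == 0:
        return 0.0
    return cov / (norm_x * norm_y)


def relevant_axes(
    columns: Dict[str, List[float]], target: str, threshold: float = 0.8
) -> List[str]:
    """Keep axes whose |correlation| with the target meets the threshold."""
    return [
        name
        for name, values in columns.items()
        if name != target and abs(pearson(values, columns[target])) >= threshold
    ]


# Made-up columns for illustration only (not measured data).
columns = {
    "target": [1.0, 2.0, 3.0, 4.0, 5.0],
    "prop_x": [2.0, 4.0, 6.0, 8.0, 10.0],  # rises with the target
    "prop_y": [5.0, 4.0, 3.0, 2.0, 1.0],   # falls as the target rises
    "prop_z": [3.0, 1.0, 4.0, 1.0, 5.0],   # only weakly related
}
kept = relevant_axes(columns, "target")
```

Here `kept` holds `prop_x` and `prop_y`, so a parallel-coordinates chart built from those axes alone would drop the weakly related `prop_z` and leave the stronger relationships easier to see.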

Ultimately, the hope is that such ability to identify and interpret the most meaningful data will guide development of materials by design.

The paper, published in npj Computational Materials, is “Data analytics and parallel-coordinate materials property charts” (DOI: 10.1038/s41524-017-0061-8).


Author

April Gocha
