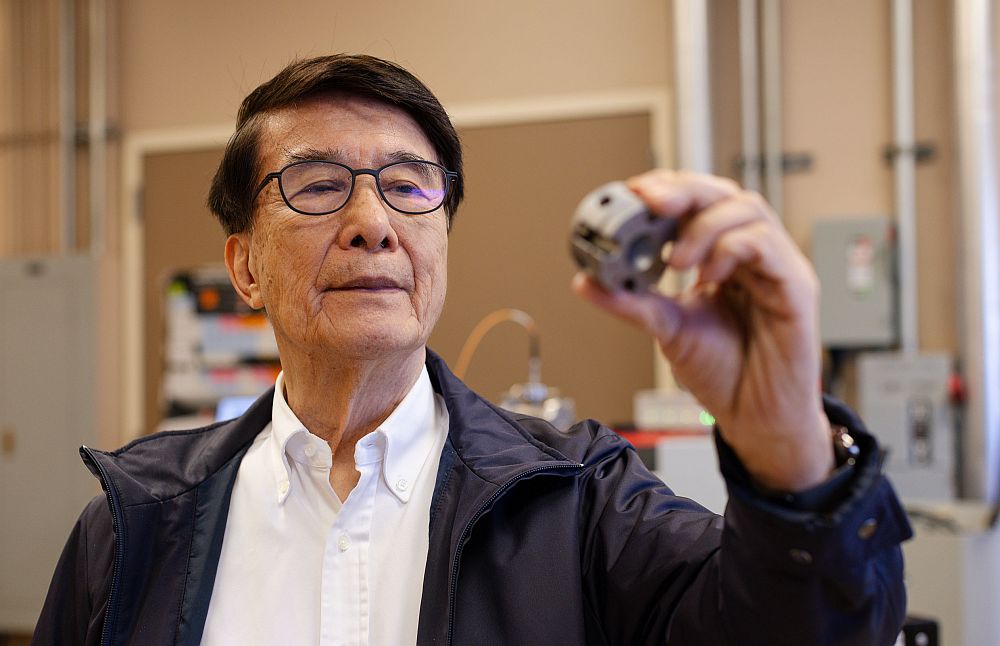

[Image above] Kismet, a 1990s-era robot built at the Massachusetts Institute of Technology, has auditory, visual, and facial systems to demonstrate simulated human emotion and appearance. Credit: Jared C. Benedict; Wikimedia.

The holiday season tends to draw out emotion and messages about emotion: peace, joy, love, happiness, gratitude. For many, negative emotions also creep into the holidays: disappointment, anger, sorrow, grief, guilt. Sound familiar?

The ability to emote is part of the human character, one element of what it means to be human. Some of us feel and express emotion acutely; others, such as people on the autism spectrum, may find it harder. We watch each other closely for emotional cues and respond to what we read. If you are a parent, for example, you know how a baby’s first smile swells your heart.

Most of us also know how easy it is to “read” an emotion incorrectly. Haven’t we all misinterpreted the emotional signals of a spouse, parent, child, friend, or coworker? And sometimes we direct our emotions at nonliving things such as computers, smartphones, and ATMs (“Why won’t you do what I want!”), as though they could respond.

While emotional intelligence is usually thought of as the domain of brain specialists such as psychologists and cognitive scientists, the artificial intelligence community is thinking about emotion, too. It’s one thing to program a robot to follow Boolean logic: if this input, then do this output; if this OR that, then do this AND that. But can a machine be coded for the grey areas? If happy, then do this; if angry, then do that; if sad, then do this until the sadness goes away. How do you program a computer to know the difference between “sadness” and “absence of sadness”?

Ray Kurzweil, the inventor behind pioneering work in computer speech recognition and optical character recognition, thinks that computers with emotional intelligence are not only possible but achievable within 20 years. His new book on the topic, released just in time for the holidays, is “How to Create a Mind.” He has written on similar topics before, including the bestseller “The Singularity Is Near: When Humans Transcend Biology.” According to the review I read, he argues in the new book that scientists may succeed in reverse-engineering the brain and constructing a machine with the functionality of a brain, complete with self-awareness, consciousness, and a sense of humor. He thinks it will be possible to code these artificial brains to have emotion, too.

The review of Kurzweil’s book reminded me of one of the most interesting books I have read, “Crashing Through: A True Story of Risk, Adventure, and the Man Who Dared to See,” by Robert Kurson. It is the story of Michael May, who lost his eyesight as a preschooler in a chemical accident. The accident destroyed the corneas of both eyes, leaving him completely blind, but the rest of his visual machinery, such as the optic nerve and retina, was undamaged. Forty-three years later, in 1999, his sight was restored with a stem cell transplant, giving doctors and scientists a unique opportunity to study the connection between the brain and vision. The story of how he learned to see offers a rare glimpse into how the brain processes visual information, including the facial signals that convey emotion. (Look here to get an idea of what he “sees.”)

Because May was so young when he lost his vision, his brain had no patterns for interpreting images; he had to learn how to “see” everything. In one memorable passage, he goes to a popular coffee shop to learn how to categorize people, and his wife coaches him on how to distinguish between the faces of men and women. He is frustrated by how often he guesses wrong. By touch he could determine gender instantly, but relying on eyesight alone, he found the differences too slight to sort out. His wife coached him in other areas, too, such as whether a person would be considered attractive.

May struggled to teach his brain the kind of pattern recognition Kurzweil wants to program computers to do. May is an intelligent and successful businessman with all the requisite background knowledge: he already knew the genders, he already knew the spectrum of possible human emotion, he already knew how to respond to emotion in others, and he had the human intelligence to make leaps and connections across types of information. If it was difficult for him to learn to tell a man’s face from a woman’s, the visual cues that distinguish one emotion from another are probably orders of magnitude more subtle from a training or coding perspective. For example, how different are the facial expressions for frustration and grief, or a grimace and a polite smile? Not much.

MIT professor Rosalind Picard agrees. A field of computer science called “affective computing” has emerged around the artificial intelligence of emotion, and Picard is at its vanguard; in fact, she coined the term, which is also the title of her book, “Affective Computing.” An NSF newsletter highlighting her work observes that affective technologies could be welcome enablers for people who struggle to interact with the world, such as those with autism or even blindness.

However, as she points out in this TEDx talk, programming computers to recognize emotion is extremely complicated because emotions are shared through a complex, nonverbal language. She describes the computer science involved as an “extraordinarily complex problem that make[s] chess and ‘Go’ look trivial.” At about the 16-minute mark in the talk, she explains why:

> With over 10,000 facial expressions that can be communicated and change every second, multiplied by the faces taking part in the conversation and the social games people play, it’s probably the hardest computer science problem to solve in the next century as we go forth. The complexity is just astronomical.

So, for the foreseeable future, we will be on our own to interpret the emotional communication in every human interaction. Thank goodness.

Author

Eileen De Guire

CTT Categories

- Basic Science

- Modeling & Simulation

- Optics